Replacing cron jobs with Jenkins

Problem:

I've got quite a few servers kicking around, in various different locations and usually doing various different things.

Across all of these servers there are always cron jobs set up to do small things here and there - perhaps back something up or clean up some files. Hell, I even have one to cycle a switch port to work around a bug / incompatibility between a Cisco IP phone and FreePBX.

There are a few problems with this approach:

- No single point of management across servers

- No reliable logging without complex syslog setup

- No easy way of knowing if a task succeeded or failed

- No notifications if a failure occurs

Solution:

I've been playing with Jenkins for less than 24 hours but I already rather like it, and have converted my home infrastructure to use it for job scheduling across Linux servers.

Jenkins isn't really designed as a task scheduling server - but it does a rather good job.

You can define each job within Jenkins and use the SSH Plugin to execute a command on a remote server - essentially replacing cron.

Implementation:

Getting Started:

- Of course, everything I run is in Docker these days, and Jenkins is no exception. It's nice and easy to get started using Jenkins in Docker using the following:

docker run -p 8080:8080 -p 50000:50000 -v /your/home:/var/jenkins_home jenkins

Port 8080 is for the web GUI and port 50000 is used by inbound build agents connecting back to Jenkins. I actually leave port 50000 closed because I don't need it.

It's useful to have a persistent storage volume so you can keep data between Jenkins deployments (if you want to update the container etc).

- Deploy Jenkins by following the instructions. You will need the default admin password, which is displayed when Jenkins starts. You can find this by running `docker logs` on your new container.

- Connect to Jenkins by browsing to your host IP on port 8080. You should be greeted with some standard instructions and set up a user account.
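As a rough sketch of the steps above, the following shell functions wrap the Docker commands. The container name and host path are placeholders, and `jenkins/jenkins:lts` is the currently maintained image name on Docker Hub rather than the bare `jenkins` image used in the command earlier, so adjust to whatever you actually deployed.

```shell
# Start Jenkins detached with a persistent home directory.
# $1 = container name (placeholder), $2 = host directory for /var/jenkins_home
start_jenkins() {
  docker run -d --name "$1" \
    -p 8080:8080 \
    -v "$2":/var/jenkins_home \
    jenkins/jenkins:lts
}

# Print the initial admin password that Jenkins generates on first start.
# It also appears in the startup output shown by `docker logs <name>`.
show_admin_password() {
  docker exec "$1" cat /var/jenkins_home/secrets/initialAdminPassword
}
```

Usage would be something like `start_jenkins jenkins /your/home` followed by `show_admin_password jenkins`. Port 50000 is deliberately not published here, since I leave it closed.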

Creating The Job

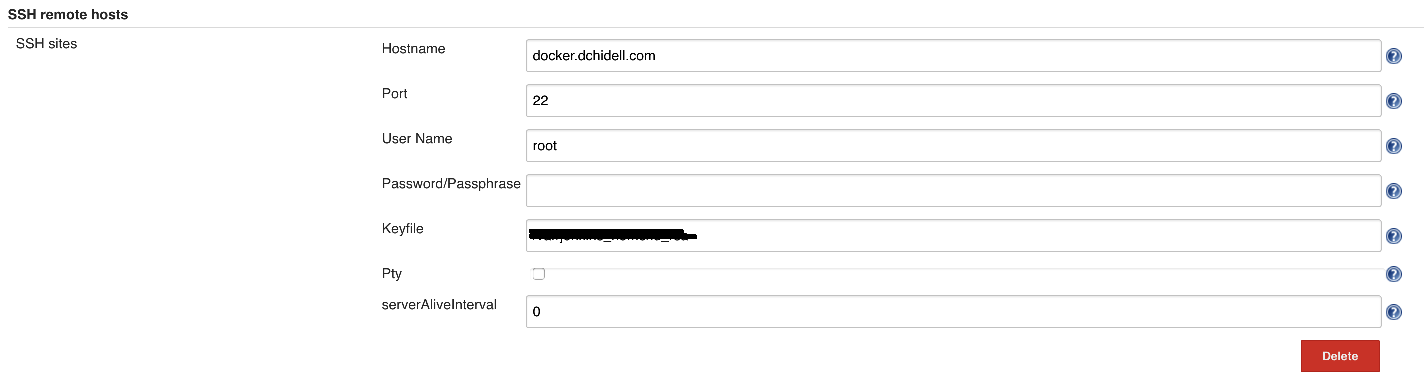

- First we need to actually add details for the hosts we want to run jobs on. This is slightly unintuitive.

Navigate to the Manage Jenkins menu item and then the Configure System option.

There is a section for adding SSH hosts under SSH remote hosts. Here is the configuration for one of my hosts:

You can use a password or a public key. I'd always recommend using the key, simply so you don't end up with a password in your configuration, but it's up to you!

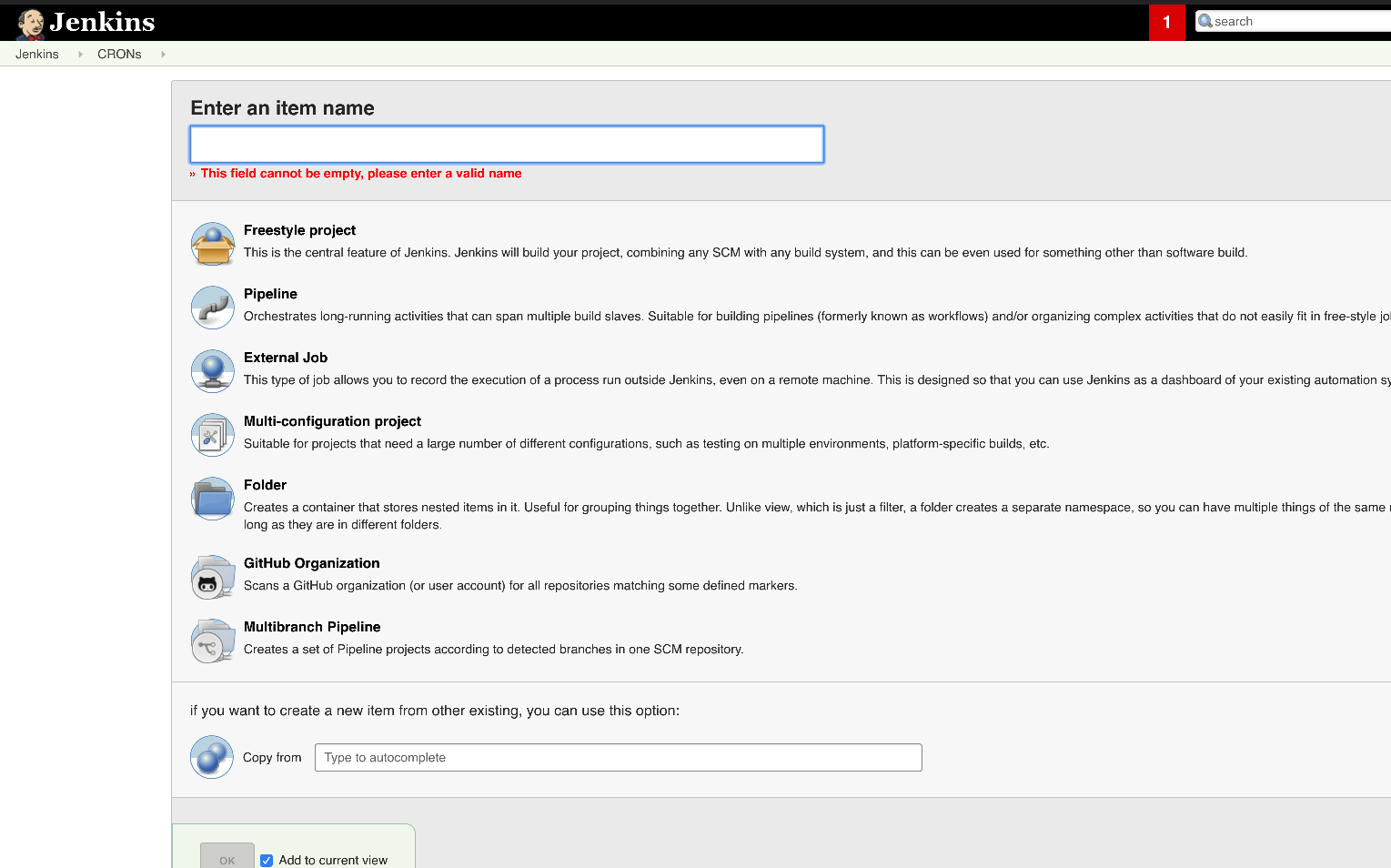

- Now that we have our host defined, we can create the job which will be run on it. Essentially this is just a list of SSH commands.

Go to the Jenkins main dashboard and select New Item. This will bring up the main page to create a job:

Give the item a name, and select Freestyle project from the list of options. Then press OK.

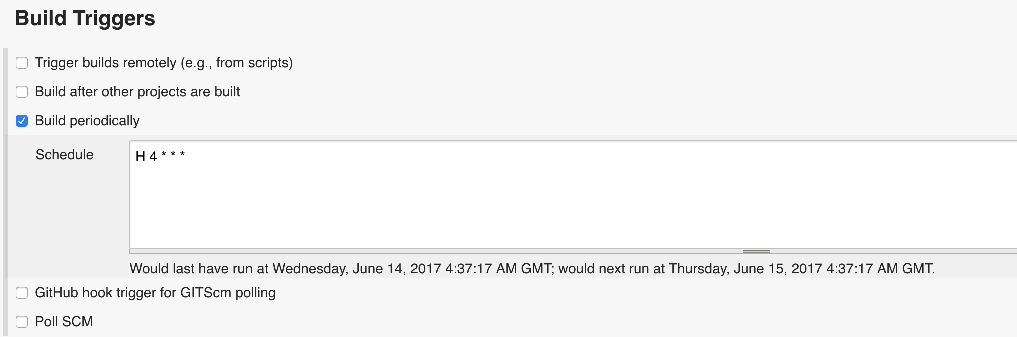

- The next point is to define the job itself, and there are two main components to this. The first is the trigger which will define the conditions under which the job is run. Since we're replacing cron here, we want to run these based on a time condition.

Above is an example of a job configured to run around 4am every day. The syntax is similar to that of cron, but it has an interesting feature in the H character. This stands for a value derived from a hash of the job name, so it looks random but stays the same for a given job, which keeps the runtime predictable. This essentially means you can schedule many jobs for around 4am without them all kicking off at once and burning the CPU.

- Now that we have the build trigger configured, we need to define what the job will actually do.
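To make the idea behind H concrete, here is a small illustration of deriving a stable, well-spread minute from a job name. This is not Jenkins' actual algorithm: the `stable_minute` helper and the job names are hypothetical, and `cksum` is used only because it is a convenient hash available on most systems. In Jenkins itself you would simply write a schedule along the lines of `H 4 * * *`.

```shell
# Illustration only: map a job name to a minute in the range 0-59.
# The same name always yields the same minute; different names spread out.
stable_minute() {
  # cksum prints "<checksum> <byte count>" for stdin; keep the checksum.
  crc=$(printf '%s' "$1" | cksum | cut -d ' ' -f 1)
  echo $(( crc % 60 ))
}

# Show where each (hypothetical) job would land within the 4am hour.
for job in nightly-backup tmp-cleanup phone-port-reset; do
  printf '%s runs at 04:%02d\n' "$job" "$(stable_minute "$job")"
done
```

Because the minute depends only on the name, re-running the script gives the same schedule every time, which is the property that makes H predictable.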

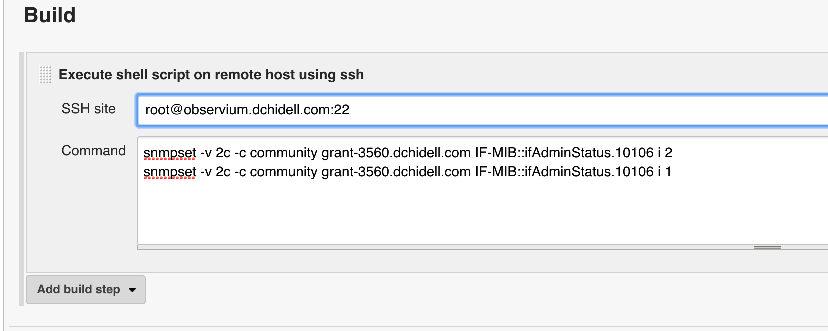

This particular job resets an IP phone by cycling a port on a Cisco switch via SNMP every day. Jenkins does not have the SNMP tools installed by default, so it SSHes to my Observium instance, which does, and runs the snmpset commands from there.

These jobs can consist of anything, so use your imagination! Most of mine are SSH sessions to a particular host that run some sort of bash command. If you're replacing cron, yours will probably look similar.
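The exact commands aren't reproduced in the text, but a port cycle over SNMP generally looks something like the sketch below. The switch address, community string and interface index are placeholders, and it assumes SNMP v2c write access and the net-snmp `snmpset` tool with IF-MIB available. Setting `ifAdminStatus` to 2 shuts the port down and 1 brings it back up.

```shell
# Bounce a switch port by toggling IF-MIB::ifAdminStatus (1 = up, 2 = down).
# $1 = switch host, $2 = write community, $3 = ifIndex, $4 = seconds to wait
cycle_port() {
  # Shut the port; give up if the switch rejects the request.
  snmpset -v2c -c "$2" "$1" "IF-MIB::ifAdminStatus.$3" i 2 || return 1
  # Wait (5 seconds unless told otherwise) so the phone notices the link drop.
  sleep "${4:-5}"
  # Bring the port back up; this exit status becomes the function's result.
  snmpset -v2c -c "$2" "$1" "IF-MIB::ifAdminStatus.$3" i 1
}
```

A call might look like `cycle_port 192.0.2.10 private 10017`, where all three values are made up for illustration.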
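For instance, a cron entry that prunes old files could move into a job's command box more or less unchanged. The sketch below is a hypothetical example, with the directory and retention period as placeholders. The one difference worth noting from cron is that the exit status now matters, so the function returns whatever `find` reports.

```shell
# Delete regular files under a directory that are older than N days.
# $1 = directory, $2 = age threshold in days
cleanup_old_files() {
  # Fail loudly if the directory is missing so the job is marked as failed.
  [ -d "$1" ] || { echo "no such directory: $1" >&2; return 1; }
  find "$1" -type f -mtime +"$2" -exec rm -f {} +
}
```

Something like `cleanup_old_files /var/backups/db 14` would then be the whole job.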

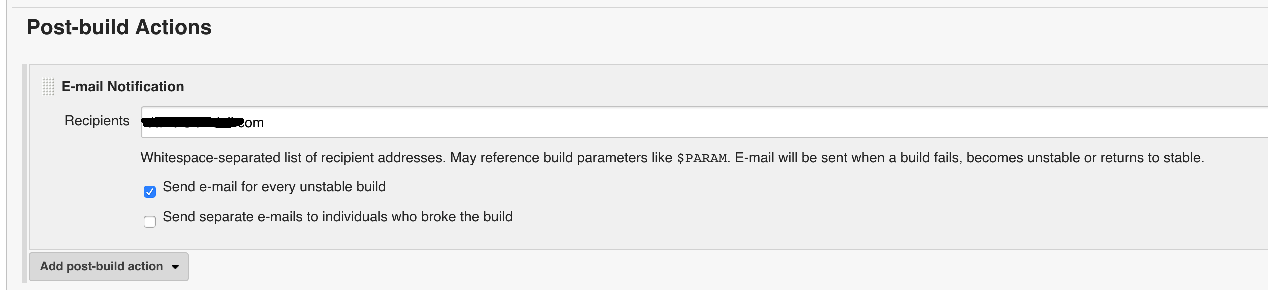

- Reporting! This is one of the best features. Logs are saved for every run, and if a job fails you can set Jenkins to email you with the full log output so you can determine exactly what failed. Over SSH, a run counts as failed if any of the executed commands returns a non-zero exit code. A non-zero code isn't always a real failure and sometimes just indicates a warning condition, so how you treat it is up to you.
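If a command uses a non-zero exit code for a condition you don't consider a failure, one option is a small wrapper that maps those codes to success before Jenkins sees them. This is a sketch rather than a Jenkins feature, and `run_tolerant` is a hypothetical helper. rsync is a handy example: it exits with 24 when source files vanish during the transfer, which is often harmless.

```shell
# Run a command, treating the listed exit codes as success.
# $1 = space-separated list of acceptable codes, rest = command to run
run_tolerant() {
  ok_codes=$1
  shift
  rc=0
  # Capture the exit status without tripping `set -e` if it is enabled.
  "$@" || rc=$?
  for code in $ok_codes; do
    if [ "$rc" -eq "$code" ]; then
      return 0
    fi
  done
  # Anything not on the list is passed through so the job still fails.
  return "$rc"
}
```

A job step could then be written as `run_tolerant "0 24" rsync -a /src/ backup:/dest/`, with the paths being placeholders.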

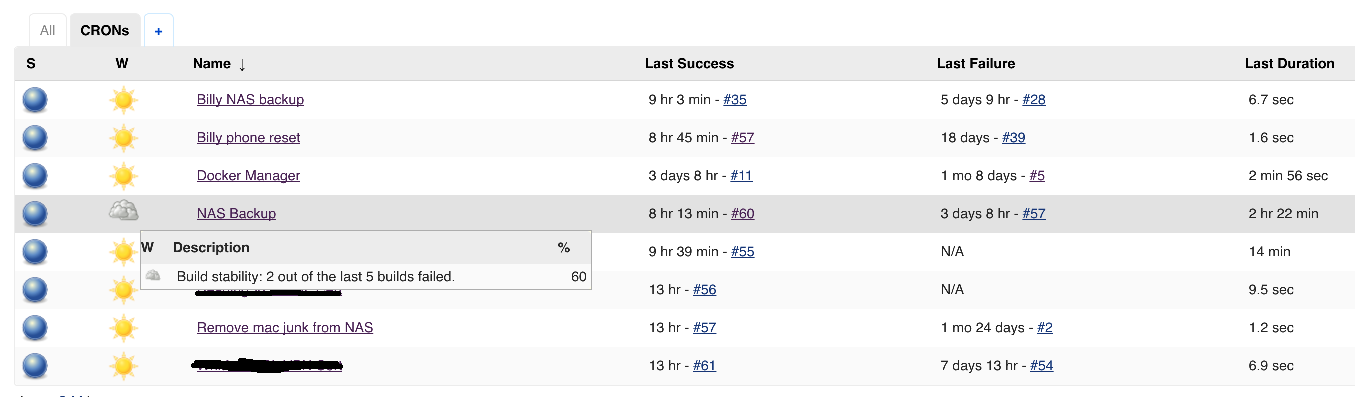

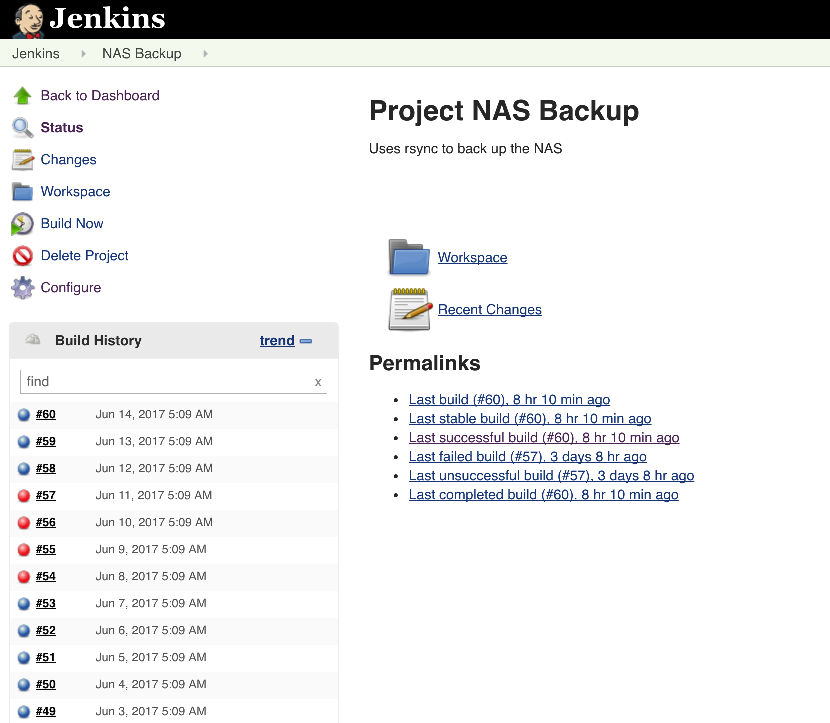

That's it really! Now when our jobs run we'll get an email report if one fails, and we can easily see the status of our other builds along the way.

If we return to the dashboard we get a 'weather report' which indicates how successful the last 5 builds have been. This is great for a quick overview of how your jobs are doing: