The shortcomings of Cisco DNS & NAT

Introduction:

Usually these posts are centered around solving a problem, and this one is no exception. However, I'm going to rant a little more than usual, as dealing with DNS and NAT in my situation has been especially painful over the last few days!

Problem:

I've got a pretty complex home network, with all sorts going on. One main aspect is that I host various services. Most of these are internal and for my own benefit, but others (such as this blog) are externally facing.

The issue, then, lies in how I access these services internally versus externally. It sounds like a simple problem, and it is. The solution, however, is a little less simple.

I make use of split-DNS. I have a trio of Windows servers spread across three locations, connected via DMVPN, which run AD as well as DNS. AD is used primarily for authentication, and DNS is, well, for DNS.

These servers are VMs, and with my growing shift towards microservice applications running in Docker, they no longer fit the direction my infrastructure is heading. I wanted to remove them. Authentication was easy: I've simply moved the services that used AD over to local authentication. I may look into OpenLDAP in future, but that's another discussion. DNS, however, is the problem.

DNS:

I use split-DNS. Internally, the Windows DNS servers handle everything relating to my dchidell.com domain: all internal clients send their lookups to them, and any lookups outside dchidell.com are simply forwarded to OpenDNS.

This works very well. I can have a record for blog.dchidell.com, for example, which points to the internal Docker host within my network. When my PC looks it up, the Windows server returns that internal address. External records are managed by an external DNS provider, and there blog.dchidell.com points to my public IP address, which my router then port-forwards to the internal host (you may see where NAT is coming into this!).

This setup works great: internal users reach resources via my DNS servers, and external users hit the external DNS servers. But how do I replicate this without the Windows machines?

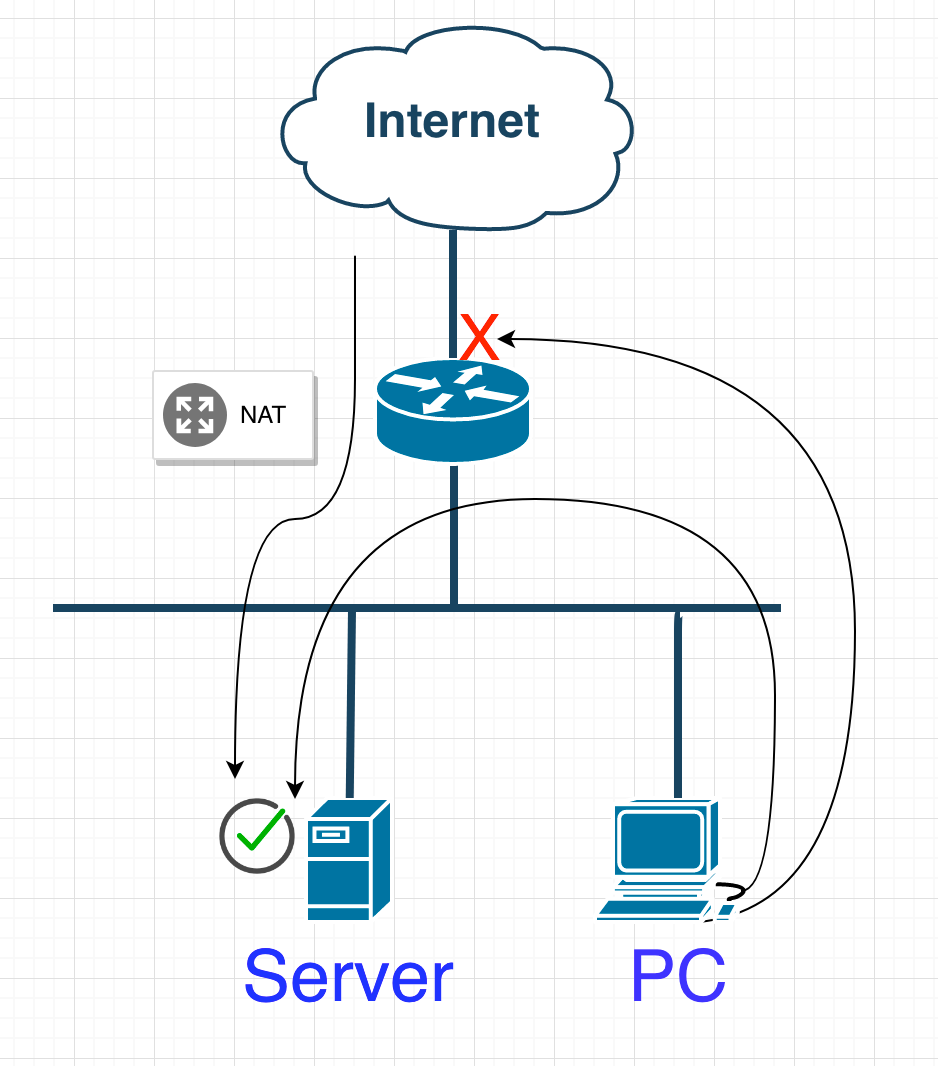

Well, let's look at why I even need split-DNS and an internal DNS server. It comes down to NAT. Without internal DNS, blog.dchidell.com would resolve to my public IP address everywhere. Internal access then fails, because requests from inside my network to my own public IP are not translated back to the internal host by the port-forward. Making that work requires NAT hairpinning (also known as NAT loopback), and it's a bit nasty. With an internal DNS server, by contrast, the record returns the private IP, so the traffic stays inside the network and reaches the service successfully. My poor attempt at a diagram illustrating this is above.
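If you want to check which side of the split-DNS a client is getting, a simple test is whether the answer is a private (RFC 1918) address or a public one: a public answer from inside the network means the traffic will need hairpin NAT. This is a minimal bash sketch; the function name `is_private_ipv4` is hypothetical, and it only handles IPv4.

```bash
#!/usr/bin/env bash
# Hypothetical helper: exit status 0 if the IPv4 address is in an RFC 1918
# private range (10/8, 172.16/12, 192.168/16), 1 otherwise.
is_private_ipv4() {
    local a b c d o
    local re='^(0|[1-9][0-9]{0,2})$'   # one decimal octet, no leading zeros
    IFS=. read -r a b c d <<< "$1"
    # Require exactly four octets, each 0-255 (a fifth part ends up in $d and fails)
    for o in "$a" "$b" "$c" "$d"; do
        [[ $o =~ $re ]] || return 1
        (( o <= 255 )) || return 1
    done
    (( a == 10 )) && return 0
    (( a == 172 && b >= 16 && b <= 31 )) && return 0
    (( a == 192 && b == 168 )) && return 0
    return 1
}
```

For example, `is_private_ipv4 "$(dig +short blog.dchidell.com | tail -n 1)"` should succeed from inside the network while the internal view is in place, and fail if the public record is being returned.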

So, this takes us towards NAT - perhaps we can solve this by changing some of the NAT configuration and punting anything internal back into the network.

NAT:

As I mentioned before, what I need here is NAT hairpinning. Usually it's a pretty nasty solution, but in my situation it would actually be fairly elegant, as it would allow me to remove all internal DNS servers.

I didn't have much luck here. One solution I found was a different NAT mechanism called NAT Virtual Interface (NVI). I'm using an IOS XE based router, and it doesn't support this mechanism! A document detailing NAT NVI can be found here: https://community.cisco.com/legacyfs/online/legacy/0/8/0/60080-NAT%20Virtual%20Interface.pdf

Since NVI was unsupported, I resorted to tinkering, adding NAT entries I thought might work; I even tried PBR (policy-based routing). All of my attempts failed, to the point where I broke my router and had to reboot it to recover. Unfortunately for me, it has a built-in switch, so the reboot also took down storage between my NAS and server, causing a whole host of problems! By 3:30am I threw in the towel, ripped the power cable out of the server and collapsed into bed. Not the best evening.

Back to DNS:

The following day, the problem was no closer to being solved. After fixing the issues created by my NAT tinkering, I decided that I was going to need an internal DNS server after all. The question was what to use.

I did not want to use my trio of AD servers. I looked into using the router itself, but it couldn't hold CNAME records or wildcard entries! I use wildcard entries because I'm lazy. Besides, even internal-only services still need their records to resolve externally: most of them are HTTP based, and Let's Encrypt needs to reach them to validate ownership of the domain.
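For comparison, this is the sort of wildcard behavior I was after, and dnsmasq provides it with a single `address=/<domain>/<ip>` line (the same form used in the script at the end of this post): the domain itself and every name beneath it get the same answer. The bash sketch below prints that line and mimics the matching rule in simplified form; `192.168.10.20` stands in for the internal Docker host and is purely illustrative.

```bash
#!/usr/bin/env bash
# Sketch of the wildcard behavior of a dnsmasq "address=/<domain>/<ip>" line.
# WILDCARD_IP is an illustrative placeholder, not a real address from my network.
WILDCARD_DOMAIN="dchidell.com"
WILDCARD_IP="192.168.10.20"

# Print the config line dnsmasq would read from /etc/dnsmasq.d/
wildcard_conf() {
    printf 'address=/%s/%s\n' "$WILDCARD_DOMAIN" "$WILDCARD_IP"
}

# Exit status 0 if a queried name falls under the wildcard:
# either the domain itself or any subdomain of it (simplified emulation)
matches_wildcard() {
    local name=${1%.}     # ignore a trailing root dot
    name=${name,,}        # DNS names are case-insensitive (bash 4+ lowercase)
    [[ $name == "$WILDCARD_DOMAIN" || $name == *".$WILDCARD_DOMAIN" ]]
}
```

Note that `notdchidell.com` does not match: only names ending in `.dchidell.com` (or the bare domain) are covered.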

I then looked into running dnsmasq as a Docker container, and did so for a few hours. This worked until other tinkering deleted the container and its image; the Docker host was still pointing at that container for DNS resolution, which created a recursive mess of failure. Best not to run a service on a platform that itself depends on that service. It's a really bad idea.

The Solution:

By now I was feeling pretty defeated. NAT had failed me (and screwed me over in the process). DNS on a Cisco router had failed me. Even Docker had failed me.

I then thought about Pi-hole, a DNS server designed to run on a Raspberry Pi, which has some great functionality for blocking advertising websites. I figured that if I gained some additional functionality, I could at least justify the wasted time and effort to myself.

I set up a brand new VM dedicated to Pi-hole (as I didn't have a Pi handy). Installation was easy and Pi-hole was running in minutes. Now all I had to do was configure some custom DNS entries and we're away! Oh, but wait. It doesn't support adding DNS entries via the GUI. Argh.

However, I then found a handy feature: you can delegate DNS lookups for a specific domain to another DNS server. I rebuilt my dnsmasq Docker container, set up the records for dchidell.com in it and pointed Pi-hole towards it. (I could instead have edited Pi-hole's configuration files directly.)
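Pi-hole's DNS engine is dnsmasq-based and reads extra configuration from `/etc/dnsmasq.d/` (the script at the end of this post writes there too), so one way to express this delegation by hand is dnsmasq's `server=/<domain>/<ip>` option. It sends queries for that domain and its subdomains to the given server, while everything else uses the normal upstreams. This is a minimal sketch: the file name `03-delegate.conf` and the address `192.168.10.53` are hypothetical, and it may not be exactly what Pi-hole's own setting writes.

```bash
#!/usr/bin/env bash
# Sketch: forward one domain to a separate resolver using dnsmasq's
# "server=/<domain>/<ip>" option. File name and addresses are hypothetical.
write_delegation() {
    local conf_dir=$1 domain=$2 upstream=$3
    mkdir -p "$conf_dir" || return 1
    # Lookups for $domain and its subdomains go to $upstream; all other
    # lookups continue to use Pi-hole's configured upstream resolvers.
    printf 'server=/%s/%s\n' "$domain" "$upstream" > "$conf_dir/03-delegate.conf"
}
```

On the Pi-hole VM this would be something like `write_delegation /etc/dnsmasq.d dchidell.com 192.168.10.53` (as root), followed by `pihole restartdns` so the new file is picked up.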

Now we had a solution. Pi-hole would be responsible for all internet-facing DNS lookups and would send any internal lookups to my separate DNS server. Perfect!

The only caveat is that we once again have a single point of failure. In fact, we have two: the VM running Pi-hole, and the Docker container running the internal DNS for dchidell.com. Time to solve that.

I've bought a Raspberry Pi 3 (it hasn't arrived yet) and plan to run both Pi-hole and dnsmasq on it as Docker containers. These can then talk to each other, and I can expose port 53 to the network and set both the Pi and the Pi-hole VM as DNS servers for my network. The disadvantage is that I'll have to maintain two configurations. That's a problem I will solve later, probably with a Jenkins job syncing the dnsmasq configuration files; I can't imagine the Pi-hole configuration will change much!

Edit: Well, not an edit. I just never published this when I wrote it. I now maintain the dnsmasq configuration for the Pi-hole machines via a GitLab CI/CD pipeline. I'd write a whole article on it, but this one has sat in the unpublished section for too long. Here's what I'm using:

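```bash
#!/usr/bin/env bash
# Pi-hole hosts to keep in sync (placeholder addresses)
piholes=("ipaddr_1" "ipaddr_2" "ipaddr_n")

for i in "${piholes[@]}"; do
    # SSH_OPTIONS comes from the CI environment and is left unquoted on
    # purpose, so several options split into separate arguments.
    # Point dnsmasq at the LAN hosts file and add the wildcard record for the domain
    ssh $SSH_OPTIONS "root@$i" 'printf "addn-hosts=/etc/pihole/lan.list\naddress=/dchidell.com/<wildcard address>\n" | tee /etc/dnsmasq.d/02-lan.conf'
    # Copy the host records from the repository
    scp $SSH_OPTIONS lan.list "root@$i:/etc/pihole/lan.list"
    # Restart DNS so the new configuration takes effect
    ssh $SSH_OPTIONS "root@$i" 'pihole restartdns'
done
```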
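The script copies a `lan.list` file from the repository without showing it. `addn-hosts` files use ordinary hosts-file syntax (an address followed by a name on each line), and as I read the dnsmasq man page, names from hosts files override an `address=` wildcard for those individual names, so specific records can coexist with the catch-all. A hypothetical helper for generating the file from `name=address` pairs might look like this; the function name and example addresses are illustrative only.

```bash
#!/usr/bin/env bash
# Hypothetical helper: turn "name=address" pairs into hosts-file lines
# ("address name") suitable for a dnsmasq addn-hosts file such as lan.list.
make_lan_list() {
    local pair name addr
    for pair in "$@"; do
        # Skip anything that isn't of the form name=address
        if [[ $pair != *=* ]]; then
            echo "skipping malformed entry: $pair" >&2
            continue
        fi
        name=${pair%%=*}   # text before the first "="
        addr=${pair#*=}    # text after the first "="
        if [[ -z $name || -z $addr ]]; then
            echo "skipping malformed entry: $pair" >&2
            continue
        fi
        printf '%s %s\n' "$addr" "$name"
    done
}
```

Usage would be along the lines of `make_lan_list blog.dchidell.com=192.168.10.20 nas.dchidell.com=192.168.10.30 > lan.list`, run before the deployment loop.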

Conclusion:

Avoid a Cisco router as a DNS server, unless you want pure forwarding functionality.

Avoid trying to get a Cisco box to apply external NAT port-forwarding to traffic from internal addresses (it may be possible and I just don't know how).

Pi-hole is pretty awesome, but not quite as feature-rich as I'd like.

DNS can screw you over - sometimes a VM is better than a container!